Shaping an effective and equitable digital transformation in education requires a dual focus: facilitating digital change and mitigating its risks. Digitalisation must serve clear educational goals, such as personalisation, inclusivity for students with special needs, and enhancing social diversity. Although most countries have updated their digital education strategies since 2020, a gap remains in aligning these strategies with specific educational aims and in using digital tools to achieve them.

Effective governance involves ensuring access to a digital ecosystem that supports these goals, building confidence in the use of digital tools, protecting personal data, addressing digital inequalities, and encouraging the development of useful and affordable educational technologies. Key policy measures, which can tackle several of these challenges simultaneously, include promoting interoperability, enhancing privacy and data protection, utilising public procurement strategically, and establishing institutions to oversee digital education implementation.

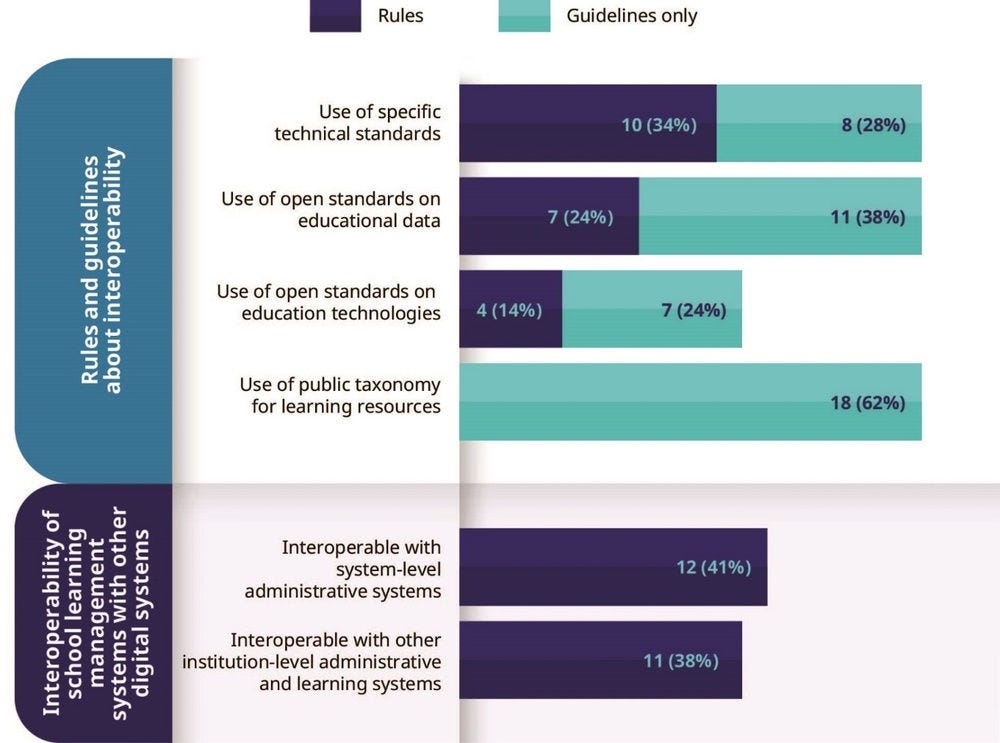

Interoperability enhances the consistency and exchangeability of data across different systems, streamlining processes by reducing the need for manual data re-entry, reformatting, or transformation. This facilitates more cost-effective and rapid delivery of information, supporting decision-making and actions. Without interoperable tools, data sharing becomes error-prone and inefficient, consuming more time and resources. Therefore, interoperability is crucial for improving both the efficiency and effectiveness of digitalisation in education (Vincent-Lancrin and González-Sancho, 2023[45]).

Achieving system-wide interoperability in education requires adopting shared standards for technology, including technical specifications, data definitions, and system architecture models. It may also necessitate aligning organisational processes and establishing a legal framework to enable innovative use of educational data. Transitioning to a cohesive technology and data ecosystem involves addressing legacy systems with outdated standards, raising awareness of interoperability benefits, creating incentives and mandates for standard adoption, ensuring systems are sustainable and adaptable, and leveraging international initiatives.
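To illustrate what a shared data definition means in practice, the following minimal sketch maps records from two differently structured systems onto one common record type. Everything in it is an assumption made for illustration: the `StudentRecord` fields, the two source formats, and the function names are hypothetical and do not correspond to any standard or national system discussed here.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class StudentRecord:
    """Hypothetical shared data definition agreed between systems."""
    student_id: str
    family_name: str
    given_name: str
    birth_date: date


def from_enrolment_system(row: dict) -> StudentRecord:
    # Hypothetical system A: one "Family, Given" name field, date as DD/MM/YYYY.
    family, given = [part.strip() for part in row["name"].split(",", 1)]
    day, month, year = (int(p) for p in row["dob"].split("/"))
    return StudentRecord(str(row["id"]), family, given, date(year, month, day))


def from_learning_platform(row: dict) -> StudentRecord:
    # Hypothetical system B: separate name fields, ISO 8601 date string.
    return StudentRecord(
        row["learner_ref"],
        row["last_name"],
        row["first_name"],
        date.fromisoformat(row["date_of_birth"]),
    )


a = from_enrolment_system({"id": 1042, "name": "Okafor, Ada", "dob": "07/03/2011"})
b = from_learning_platform(
    {
        "learner_ref": "1042",
        "last_name": "Okafor",
        "first_name": "Ada",
        "date_of_birth": "2011-03-07",
    }
)

# Once both systems emit the shared definition, records can be compared
# or exchanged without manual re-entry or reformatting.
records_match = a == b
```

In this sketch each system needs only one adapter to the shared definition, rather than a separate converter for every other system it exchanges data with, which is the practical appeal of a common standard.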

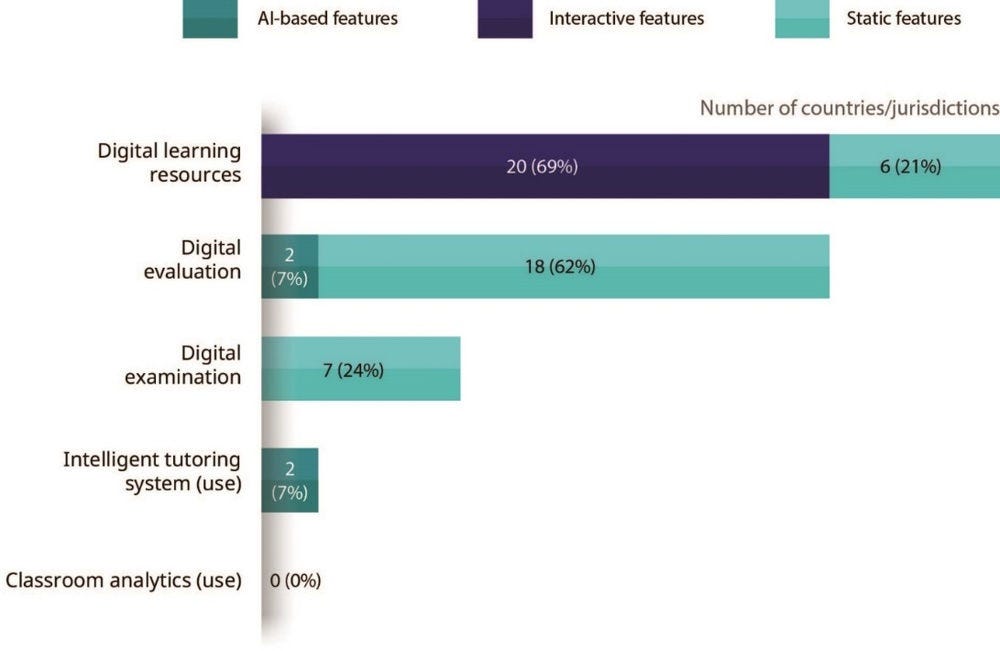

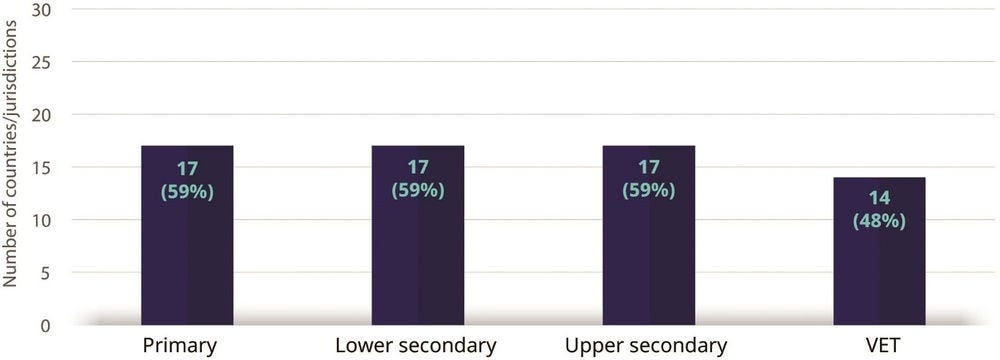

In the absence of comprehensive school surveys, accurate data on interoperability in education are scarce. Only a few countries have established mandatory interoperability standards for their administrative systems or for the semantic interoperability of digital learning resources. Currently, fewer than a third of countries report widespread interoperability between school learning management systems and other digital tools at the system or institution level, indicating significant room for improvement towards a fully effective digital education ecosystem.

While imposing regulations on technical interoperability may not be the best approach, a growing number of technical solutions promote interoperability. Governments can do more to enhance semantic interoperability for both administrative and educational content. Approximately two-thirds of countries recommend using taxonomies for organising learning resources, yet more international standards focusing on content are needed to further this effort.

Robust privacy and data protection measures are crucial for digital transformation in education, addressing potential risks and fostering trust in the use of data and AI. Protecting personal data against cyber threats and shielding children from inappropriate content are key components that require technical and human vigilance. The digitalisation of education, offering more personalised learning experiences, necessitates the careful handling of personal education records, which introduces privacy and security challenges.

All countries enforce privacy and data protection laws applicable to education, with many having specific regulations for educational data. Implementing a risk management framework that balances the benefits of educational data use with privacy concerns is essential. This approach involves moving beyond the unrealistic goal of eliminating all privacy risks to focus on managing data access, sharing, and use, combining data-focused and governance-focused strategies for better privacy protection. For instance, addressing biases and improving fairness in education may require the collection of personal data. In a fast-changing digital environment, some measure of flexibility in terms of regulation and guidance may be required to maintain the appropriate balance between innovation and protection.

Despite the existence of guidelines on privacy and data protection, active monitoring of their implementation in schools is rare. Privacy awareness campaigns and training are increasingly used to strengthen safeguards. Moreover, the potential for sharing data collected by commercial providers under data protection laws could lead to more innovation in digital education tools and resources. This underscores the need for a balanced approach to data governance that ensures privacy while enabling the beneficial use of educational data (Vincent-Lancrin and González-Sancho, 2023[46]).

As technology advances to automate decisions and collect sensitive data such as biometrics, the need for technology governance in education grows. This governance might involve obligations attached to the use of automated decision-making, restrictions on certain technologies, and requirements for transparency and expert examination of algorithms. As of 2024, few OECD countries regulate the use of technology and algorithms in education, with France being a notable exception, emphasising the need for explainable AI and restricting certain uses. The emergence of generative AI has prompted some countries to draft guidelines, with France and Korea moving towards specific regulations and the European Union progressing towards an AI Act that will enforce stricter controls on AI in high-risk sectors, including education.

A key principle in these guidelines is maintaining human oversight in AI decision-making to mitigate errors and biases, ensuring that humans have the final say, especially when AI performance is imperfect. This approach also advocates for providing non-digital alternatives to support inclusivity and offer opt-out options where feasible.
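The human-oversight principle can be sketched as a simple decision gate. This is a minimal illustration under stated assumptions, not a description of any country's guidelines: the `Recommendation` and `Decision` types, the `decide` function, and the 0.8 confidence threshold are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Recommendation:
    student_id: str
    suggestion: str
    confidence: float  # hypothetical model score in [0, 1]


@dataclass
class Decision:
    student_id: str
    outcome: str
    decided_by: str  # always a human reviewer, never the model
    followed_ai: bool


def decide(
    rec: Recommendation,
    reviewer: str,
    override: Optional[str] = None,
    min_confidence: float = 0.8,  # assumed threshold, for illustration only
) -> Decision:
    """Record a decision that a named human reviewer has signed off."""
    if override is not None:
        # The reviewer's explicit outcome always takes precedence.
        return Decision(
            rec.student_id, override, reviewer,
            followed_ai=(override == rec.suggestion),
        )
    if rec.confidence < min_confidence:
        # Low-confidence suggestions cannot simply be accepted as-is.
        raise ValueError("low-confidence recommendation needs an explicit human outcome")
    return Decision(rec.student_id, rec.suggestion, reviewer, followed_ai=True)


rec = Recommendation("s1", "extra reading support", 0.55)
decision = decide(rec, reviewer="teacher-7", override="no change")
```

Here the model only ever produces a suggestion; every recorded outcome carries a reviewer's identity, and a weak suggestion is rejected unless the reviewer states the outcome explicitly.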