Access to diverse sources of information, plural and independent news sources, and free and open discourse are all needed to enable informed democratic debate. However, in today's digital and interconnected world, the spread of misinformation and disinformation poses a critical threat to the foundational elements of our societies. Its rapid proliferation undermines trust in institutions and elections, fuels societal divisions, and jeopardises public health initiatives, thereby threatening the very fabric of democracy and informed decision-making.

Mis- and disinformation

The spread of false and misleading information poses significant risks to the well-being of people and society. While such content is not necessarily illegal, it can contribute to polarisation, jeopardise the implementation of policies, and undermine trust in democratic institutions and processes. Action is required to strengthen the integrity of information spaces to protect freedom of expression and democratic engagement.

Key messages

New evidence from the OECD shows that people's perceived ability to identify false and misleading content online is uncorrelated with their measured ability, calling into question the use of perception surveys in this area. The OECD Truth Quest Survey measures the ability of people to identify false and misleading content online across 21 countries. In total, 40 765 people completed Truth Quest across five continents. Respondents correctly identified true and false content 60% of the time. On average, true claims were more difficult to identify than false and misleading content.

Governments must explore the constructive roles they can play in reinforcing the integrity of the information space by implementing policies that enhance the transparency, accountability, and plurality of information sources, including traditional media and online platforms. This includes advancing regulatory efforts, as applicable, to promote media pluralism and independence, and to encourage the accountability and transparency of online platforms by gathering more information on risks, management processes, algorithms, and data flows. Governments should also upgrade their institutional architecture, including by putting in place co-ordination mechanisms, strategic frameworks, and capacity-building programmes that support a coherent vision and approach to strengthening information integrity within the public administration.

New technologies present evolving opportunities and challenges to the information space. The development and use of generative artificial intelligence (AI) will magnify changes to the information environment even further. AI solutions can accurately detect many types of false information and recognise disinformation tactics deployed through bots and deepfakes. At the same time, the technical limitations of AI and other technologies point to the need for a hybrid approach that integrates both human intervention and technological tools. Governments have a crucial role to play in facilitating collaboration between researchers, industry experts and the scientific community to develop and implement such approaches effectively.

As society becomes increasingly exposed to multiple sources of information, from traditional media to social media platforms, individuals need to be equipped with the tools and skills to navigate this complex environment. There is no silver bullet to combat mis- and disinformation. Instead, a long-term and systemic effort to build societal resilience through media, digital, and civic literacy should seek to empower individuals to cultivate critical thinking skills and to identify and counter the spread of false and misleading information.

Freedom of expression, media independence, and access to information are the cornerstones of democracy. A diverse, pluralistic and independent media sector, with an emphasis on local journalism, plays a key role as a watchdog for the public interest, helping to curb disinformation and hold state and non-state actors to account. Fostering a diverse and competitive media landscape requires limiting market concentration and promoting editorial independence as well as transparency and diversity of media ownership. Government support for a diverse and independent media sector is also recognised as a priority for international co-operation and development.

Although people often get their news from social media, it is also the least trusted news source: on average, 57% of people trust it not much or not at all as a reliable source of news, while only 9% trust it a lot. On average across countries, those who trust news on social media a lot had a lower Truth Quest score (54%) than those who trust it not much or not at all (62%). The Truth Quest score is calculated as the total number of correct responses divided by the total number of claims seen in the OECD Truth Quest Survey. A country score is thus an average of all respondents’ results, expressed as a percentage. The cross-country average is calculated as a simple average of the 21 country scores.
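As a rough illustration of the scoring arithmetic described above, the following Python sketch computes a respondent score, a country score and the simple cross-country average. The function names and the sample data are hypothetical and are not taken from the survey; the sketch also assumes that a country score is the unweighted mean of its respondents' individual scores, which is one reading of the description.

```python
from statistics import mean


def respondent_score(correct: int, seen: int) -> float:
    """Share of claims a respondent classified correctly, in percent."""
    if seen <= 0:
        raise ValueError("a respondent must have seen at least one claim")
    return 100.0 * correct / seen


def country_score(respondents: list[tuple[int, int]]) -> float:
    """Average of respondent scores (assumption: unweighted mean)."""
    return mean(respondent_score(correct, seen) for correct, seen in respondents)


def cross_country_average(by_country: dict[str, list[tuple[int, int]]]) -> float:
    """Simple average of country scores: each country counts equally,
    regardless of how many respondents it has."""
    return mean(country_score(r) for r in by_country.values())


# Hypothetical data: (correct responses, claims seen) per respondent.
sample = {
    "Country A": [(6, 10), (8, 10)],
    "Country B": [(5, 10)],
}

overall = cross_country_average(sample)
```

Because the overall figure is a simple average of country scores, a country with few respondents weighs as much as one with many. In the hypothetical sample, pooling all respondents would give 19 correct out of 30 claims, which differs from the average of the two country scores.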

Context

Areas for future improvements to strengthen information integrity

The scale and speed of the proliferation of false and misleading content have made countries aware of the need to develop a comprehensive view of how to improve the level of integrity in the information space. To this end, governments are increasingly setting up or upgrading their co-ordination mechanisms. Most countries highlight the importance of better co-ordination within and outside of government, as well as of building their capacity to identify and respond to disinformation threats.

Media and information literacy initiatives should be seen as part of a wider effort to reinforce information integrity

Media, information, and digital literacy initiatives often focus on giving people the tools to make conscious choices online, identify what is trustworthy, and understand platforms’ systems so that they can use them for their own benefit. Media and information literacy should be part of a larger approach to building digital literacy, for example by covering how algorithmic recommendation systems and generative AI work, as well as civic education.

Trust in news from social media varies across age groups and countries

Trust in media is a key condition for democratic societies to function. While a range of news media sources are available, social media is becoming a key news source for many people. This indicator measures the share of adults who trust news from social media sites or apps. It provides a measure of trust in social media platforms as a reliable news source.

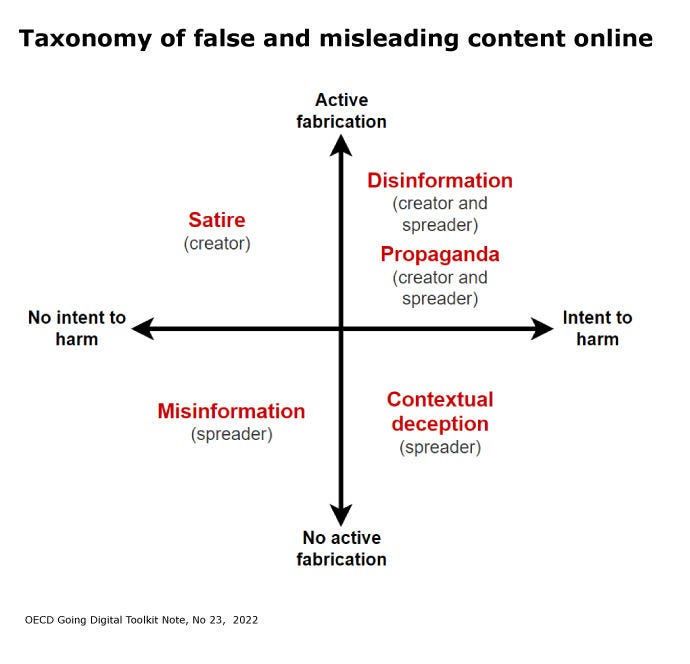

What counts as false or misleading digital content?

The challenge of disinformation is highly complex, and different content presents different levels of harm for those exposed to it. It is also critical to distinguish between the various formats of false or misleading content to help policy makers design well-targeted policies and facilitate measurement efforts to improve the evidence base. The OECD reviewed existing literature and proposed a typology of false and misleading content.

Related policy issues

- Child sexual exploitation and abuse (CSEA) online is a major global threat. It is complex, constantly evolving, and growing in scale. CSEA can take the form of images, videos or livestreams; it can involve a child being contacted by offenders who want to solicit or groom them; or it can be a combination of these. No matter what form CSEA takes, it brings untold harm to victims and survivors as well as to society.

- The digital environment is fundamental in the lives of children, providing greater access to education and social connections. However, it can also expose them to a range of harms. Recognising that policy makers need to balance opportunities and risks, the OECD focuses on fostering a digital environment that safeguards children while allowing them to harness its benefits.

- Years of health, geopolitical and economic crises have heightened the urgency for governments to ensure accurate and timely information exchange and to reconnect with citizens. Yet, amid the challenges posed by an increasingly complex information environment, the digital transformation also presents governments with new avenues for public communication.

- The Internet has connected people like no other technology before it, but bad actors are just as skilled at using it as legitimate ones. That puts users’ safety and well-being, and therefore their trust, at risk. In response, more jurisdictions are turning to regulation. However, sound policies require a solid base of evidence, and we cannot lose sight of the benefits of being online while trying to ensure users’ safety.